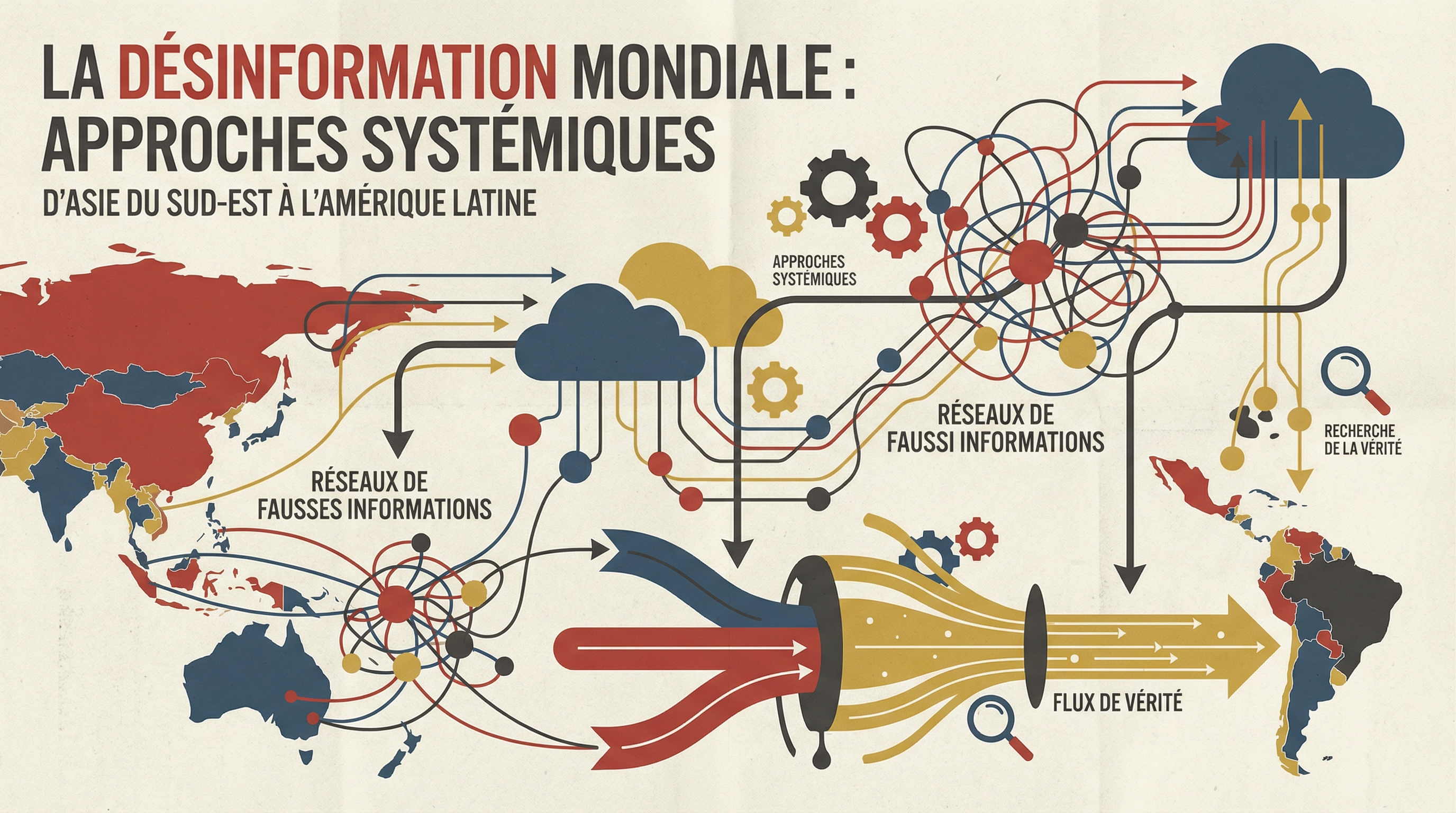

In 2026, 64% of Southeast Asian citizens regularly encounter online disinformation [1]. This figure, drawn from regional reports, captures only one facet of a global phenomenon. The World Economic Forum ranks disinformation and misinformation as the second most severe global risk over a two-year horizon and the fifth over a ten-year horizon, just after climate threats and biodiversity loss [3]. The spread of false or manipulated content has become a structural feature of the digital information ecosystem. Sopra Steria estimated its global economic cost at $417 billion in 2025, counting loss of trust, impact on financial markets, and cybersecurity costs.

Factual Context: A Complex Ecosystem with 3 Components

The speed and scale of disinformation are products of digital technologies. Three main mechanisms explain its propagation. The first is social media platforms and their algorithms. Designed to maximize user engagement, they favor content that elicits strong emotions, whether it is true or false. This attention architecture rewards sensationalism and polarization, creating "echo chambers" where false narratives are amplified. The second is encrypted private messaging applications such as WhatsApp and Telegram. They allow content to spread virally within closed, trusted groups, making verification and external intervention nearly impossible. In Brazil and India, WhatsApp was a major vector for political disinformation during recent elections. The third is the advent of generative artificial intelligence. Audio and video "deepfakes" and machine-written text that is nearly indistinguishable from human writing drastically lower the cost of producing disinformation at scale, enabling personalized and automated influence operations. In 2024, an audio deepfake imitating the US president's voice was used to discourage voters from taking part in a primary election, illustrating the destabilizing potential of these tools.

The actors in this ecosystem are varied. States such as Russia and China use disinformation as a foreign policy weapon to sow discord in democracies, or as a tool of domestic control to suppress dissent. Political groups use it to polarize public debate and mobilize their electoral base. Economic actors run "click farms" in the Philippines or North Macedonia, employing precarious workers to artificially amplify messages for commercial or political purposes. Finally, and this is a fundamental trend, ordinary citizens acting out of conviction relay false information. A Georgetown University study describes this phenomenon as "authentic disinformation" [1]. Brazil, India, Nigeria, and the Philippines have become documented examples of the impact of these strategies. In Indonesia, xenophobic campaigns against Rohingya refugees were driven by "buzzers," influence workers including housewives and political volunteers, who framed their action as a moral obligation to defend the homeland [1].

Analysis: 3 Systemic Approaches That Work

In the face of this challenge, structured responses are emerging and showing some effectiveness. A study conducted between 2024 and 2025 by the RAIDAR (Researching Asian Information Disorder and Responses) project emphasizes the importance of a multi-faceted approach [1]. A review of existing solutions worldwide points to three main pillars: fact-checking by civil society, media and information literacy education for citizens, and regulation of digital platforms by public authorities.

1. Collaborative Fact-Checking: More Than 100 IFCN Signatories Worldwide

Fact-checking is the first line of defense. Civil society organizations have specialized in verifying claims made by public figures and viral content. The International Fact-Checking Network (IFCN), hosted by the Poynter Institute, brings together more than 100 signatories of its code of principles worldwide. In the Philippines, the news outlet Rappler pioneered a collaborative model that brings together journalists, academics, NGOs, and volunteers to identify and correct false information [6]. This verification pyramid makes it possible to process a large volume of content and to spread corrections to a wide audience. In Africa, the independent organization Africa Check, founded in 2012, operates in Nigeria, South Africa, Kenya, and Senegal, producing hundreds of verification reports each year [7]. In Latin America, organizations such as Chequeado in Argentina and Lupa in Brazil play a similar role. These initiatives raise the cost of disinformation for those who spread it and give the public corrected information. Their impact is limited, however, by the speed at which false news spreads, which often exceeds their capacity to react: a correction frequently arrives only after the content has already reached a large audience.

2. Media Education: The Finnish Model and 10,500 Creators Trained by UNESCO

The most sustainable solution is to strengthen citizens' capacity to evaluate information. Finland has integrated media and information literacy (MIL) into its national curriculum at all levels, from kindergarten to adult education [8]. This policy, initiated in response to Russian propaganda after 2014, aims to develop critical thinking and the skills needed to navigate a complex information environment. Students learn to identify sources, recognize manipulation techniques, and understand the business model of platforms. International evaluations show that the Finnish population is the best prepared in Europe to resist disinformation. Inspired by this success, UNESCO actively promotes MIL globally. The organization has developed a standard curriculum for teachers and has trained more than 10,500 content creators and journalists in more than 150 countries to deploy these programs and promote quality information [2]. The objective is to build collective immunity against information manipulation by acting on the demand for fake news rather than only on its supply.

3. Platform Regulation: 2 Major Laws in Europe and Brazil

Holding technical intermediaries responsible is an increasingly common lever of action. The European Union adopted the Digital Services Act (DSA) in 2022. This legislation imposes strict obligations on very large online platforms (more than 45 million monthly active users in the EU) [9]. They must assess and mitigate the systemic risks linked to their services, including the spread of disinformation. The DSA requires greater transparency about how their recommendation and content moderation algorithms work, and obliges platforms to give vetted researchers access to their data. In Brazil, Bill 2630, dubbed the "fake news law," aims to regulate platforms and messaging applications. Although its adoption is blocked by intense lobbying from technology giants, it provides for transparency and due diligence obligations for platforms, with sanctions for non-compliance. These regulatory frameworks aim to force technology companies to take responsibility for the health of the information ecosystem, moving from voluntary self-regulation to binding legal obligations.

Nuances and Limits: The 3 Blind Spots of the Fight Against Disinformation

Each of these approaches runs into significant obstacles. First, the effectiveness of fact-checking is limited by cognitive biases. Social psychology studies show that individuals tend to reject information that contradicts their pre-existing beliefs (confirmation bias). Fact-checking is therefore less effective among already polarized populations. Second, media education is a long-term strategy. Deploying it at scale requires considerable financial and human investment, as well as strong and lasting political will, which is lacking in many countries. Third, platform regulation raises fundamental questions about freedom of expression. Disinformation is hard to define, and the risk of censoring legitimate but critical speech is real. Platforms themselves point to the technical difficulty of moderating billions of pieces of content every day in hundreds of languages.

The spread of disinformation in private and encrypted spaces remains a major challenge. Interventions there are technically complex and raise ethical and privacy concerns. Finally, the emergence of disinformation carried by "authentic" actors, as documented by the Georgetown University study, raises a new question [1]. When disinformation is not the product of a clandestine operation but the expression of sincere conviction, albeit based on erroneous facts, counter-strategies must be rethought to avoid reinforcing these communities' sense of persecution.

Perspective: A Technological and Democratic Race

No miracle solution will win the fight against disinformation. Only a mix of approaches, combining the reactivity of fact-checking, the depth of education, and the force of law, can stem the phenomenon. Platform transparency, which regulations like the DSA are beginning to impose, is a necessary condition for independent research on propagation dynamics and on the effectiveness of countermeasures. Collaboration between researchers, civil society, journalists, and public authorities is essential.

The central question for the coming years is adaptation. The AI technologies that make it possible to create disinformation can also be used to detect it, and a technological race is underway. But beyond technology, what is at stake is the resilience of democratic institutions, trust in professional media, and citizens' capacity to engage in fact-based public debate. The response to the disinformation crisis will therefore be as much democratic as technological, and it will require continuous and coordinated investment on a global scale.

References

1. Georgetown University, Georgetown Journal of International Affairs, "Tackling the Disinformation Ecosystem: System-Based Insights from Southeast Asia", 2026. (https://gjia.georgetown.edu/2026/03/20/tackling-the-disinformation-ecosystem-system-based-insights-from-southeast-asia/)

2. UNESCO, "World Trends in Freedom of Expression and Media Development Report 2022-2025", 2026. (https://www.unesco.org/en/articles/new-report-unesco-warns-serious-decline-freedom-expression-and-safety-journalists-worldwide)

3. World Economic Forum, "The Global Risks Report 2026", 2026. (https://www.weforum.org/publications/global-risks-report-2026/)

4. Carnegie Endowment for International Peace, "Countering Disinformation Effectively: An Evidence-Based Policy Guide", 2024. (https://carnegieendowment.org/research/2024/01/countering-disinformation-effectively-an-evidence-based-policy-guide)

5. Oxford Internet Institute, "Disinformation Research". (https://www.oii.ox.ac.uk/news-events/oii_tag/disinformation/)

6. Rappler. (https://www.rappler.com/)

7. Africa Check. (https://africacheck.org/)

8. Finnish Government, "Media literacy in Finland".

9. European Commission, "The Digital Services Act". (https://digital-strategy.ec.europa.eu/en/policies/digital-services-act)
